
Inside the Facebook AI Revolution: The Evolution of Content Moderation

Revolutionizing Content Moderation: Moonbounce’s Innovative Approach

When Brett Levenson left Apple in 2019 to lead business integrity at Facebook, he joined the social media giant at a turbulent time, in the aftermath of the Cambridge Analytica scandal. Levenson initially believed that better technology was the key to solving Facebook’s content moderation problems.

He soon discovered, however, that the problem went beyond technology. Human reviewers had to memorize a 40-page policy document that had been machine-translated into their languages. They then had just 30 seconds per flagged item to decide not only whether it broke the rules but also what to do about it: block it, ban the user, or limit its distribution. According to Levenson, those rapid decisions were only “slightly more accurate than a coin flip.”

Levenson was frustrated by how slow and reactive this approach was, arguing that it could not keep pace with adversaries who are agile and well funded. The rise of AI chatbots has made the problem worse, producing notable moderation failures such as chatbots giving self-harm advice to teenagers and AI-generated images slipping past safety filters.

That frustration led to the idea of “policy as code”: turning static policy documents into executable logic that can be updated quickly and is tied directly to enforcement. The idea became the foundation for Moonbounce, which recently raised $12 million in a round co-led by Amplify Partners and StepStone Group.
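
To make the idea concrete, here is a minimal Python sketch of what “policy as code” could look like, assuming a policy is expressed as versioned rules that map a content category and a model risk score threshold to an enforcement action. The `Rule` and `Policy` classes, the category names, and the thresholds are hypothetical illustrations, not Moonbounce’s actual format.

```python
from dataclasses import dataclass, field
from enum import Enum


class Action(Enum):
    ALLOW = "allow"
    REVIEW = "review"  # slow distribution and queue for human review
    BLOCK = "block"


# Higher number = more severe action.
SEVERITY = {Action.ALLOW: 0, Action.REVIEW: 1, Action.BLOCK: 2}


@dataclass(frozen=True)
class Rule:
    # Hypothetical rule: fires when the score for `category` reaches `threshold`.
    category: str
    threshold: float
    action: Action


@dataclass
class Policy:
    version: int
    rules: list[Rule] = field(default_factory=list)

    def evaluate(self, scores: dict[str, float]) -> Action:
        """Return the most severe action triggered by any rule (ALLOW if none fire)."""
        triggered = [r.action for r in self.rules
                     if scores.get(r.category, 0.0) >= r.threshold]
        return max(triggered, key=SEVERITY.__getitem__, default=Action.ALLOW)


# Changing enforcement means shipping a new policy version, not retraining reviewers.
policy_v1 = Policy(version=1, rules=[
    Rule("self_harm", 0.5, Action.REVIEW),
    Rule("self_harm", 0.9, Action.BLOCK),
    Rule("harassment", 0.7, Action.REVIEW),
])
policy_v2 = Policy(version=2, rules=policy_v1.rules + [Rule("harassment", 0.95, Action.BLOCK)])

print(policy_v1.evaluate({"self_harm": 0.6}))    # Action.REVIEW
print(policy_v2.evaluate({"harassment": 0.97}))  # Action.BLOCK
```

Keeping rules as data rather than prose means a policy change can be reviewed, versioned, and applied to every decision at once, instead of waiting for reviewers to relearn a document.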

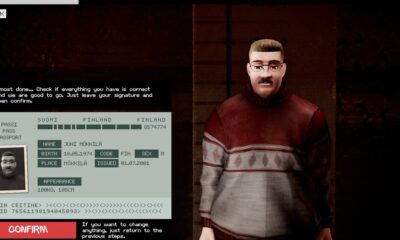

Moonbounce works with companies to add a layer of safety wherever content originates, whether it comes from users or from AI systems. The company has trained a language model to interpret a client’s policy documents, evaluate content in real time, return a decision within 300 milliseconds, and act on it. Depending on the client’s preferences, that action may be temporarily slowing the content’s distribution until a human can review it, or blocking high-risk content outright.
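
The enforcement flow described above can be sketched as a deadline-bounded check. In this minimal Python sketch, `classify` is a hypothetical stand-in for the model call and the 300 ms budget is the figure cited above; the score thresholds and the decision to hold content for human review when the deadline is missed are assumptions for illustration, not Moonbounce’s documented behavior.

```python
import asyncio
from typing import Awaitable, Callable

LATENCY_BUDGET_S = 0.300  # the 300 ms response time cited above


async def moderate(
    content: str,
    classify: Callable[[str], Awaitable[float]],  # hypothetical model call -> risk in [0, 1]
    block_at: float = 0.9,   # assumed client-configured thresholds
    review_at: float = 0.5,
) -> str:
    """Return 'allow', 'throttle_for_review', or 'block' within the latency budget."""
    try:
        risk = await asyncio.wait_for(classify(content), timeout=LATENCY_BUDGET_S)
    except asyncio.TimeoutError:
        # Assumption: on a missed deadline, fail safe by slowing distribution
        # and queueing the item for a human rather than publishing it.
        return "throttle_for_review"
    if risk >= block_at:
        return "block"                # high risk: stop it immediately
    if risk >= review_at:
        return "throttle_for_review"  # borderline: limit reach until a human looks
    return "allow"


# Toy stand-ins for the model, for demonstration only.
async def fast_classifier(text: str) -> float:
    await asyncio.sleep(0.01)
    return 0.95 if "threat" in text else 0.1


async def slow_classifier(text: str) -> float:
    await asyncio.sleep(1.0)  # exceeds the 300 ms budget
    return 0.0


print(asyncio.run(moderate("hello there", fast_classifier)))  # allow
print(asyncio.run(moderate("a threat", fast_classifier)))     # block
print(asyncio.run(moderate("hello there", slow_classifier)))  # throttle_for_review
```

Making the thresholds parameters mirrors the idea that each client chooses how aggressively content is slowed or blocked.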

Moonbounce currently serves three main markets: platforms built on user-generated content, such as dating apps; AI companies building characters or companions; and AI image generators.

According to Levenson, Moonbounce currently handles more than 40 million reviews a day and serves more than 100 million daily active users across its customers’ platforms. Customers include AI companion startup Channel AI, image and video generation company Civitai, and character roleplay platforms Dippy AI and Moescape.
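
For a rough sense of scale, the review volume can be turned into a per-second load. This back-of-envelope Python calculation assumes reviews are spread evenly across the day (real traffic is burstier) and applies Little’s law, treating the 300 ms response time cited above as the time each review spends in the system; the results are illustrative estimates, not figures reported by Moonbounce.

```python
SECONDS_PER_DAY = 24 * 60 * 60   # 86,400
daily_reviews = 40_000_000       # "more than 40 million" reviews a day
latency_s = 0.300                # per-decision response time cited earlier

# Average arrival rate, assuming uniform traffic over 24 hours.
reviews_per_second = daily_reviews / SECONDS_PER_DAY

# Little's law: average items in flight = arrival rate x time in system.
in_flight = reviews_per_second * latency_s

print(f"{reviews_per_second:.0f} reviews/s on average")  # 463 reviews/s on average
print(f"~{in_flight:.0f} decisions in flight at once")   # ~139 decisions in flight at once
```

Because 40 million is a floor and peak traffic exceeds the daily average, actual capacity would likely need to be well above these numbers.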

Levenson argues that safety can be a product benefit rather than just a cost, a perspective that sets Moonbounce apart. By building safety into their products, customers can use Moonbounce’s technology to improve their offerings and differentiate themselves from competitors.

Tinder’s head of trust and safety has recently said that services powered by large language models (LLMs) have significantly improved detection accuracy.

Lenny Pruss, a general partner at Amplify Partners, pointed to the mounting pressure on AI companies after incidents in which chatbots steered vulnerable users toward harmful behavior and image generators were misused to create unauthorized content. Failures of in-house safety systems have raised liability concerns, pushing AI companies to seek outside help to strengthen their safety infrastructure.

Levenson explained that Moonbounce acts as a third party sitting between users and chatbots. Because it is separate from the conversation, it can enforce rules in real time without being weighed down by the accumulated context of the chat itself. The company aims to nudge chatbots toward more supportive responses, particularly in sensitive situations, through a feature it calls “iterative steering.”

In its work with AI companies, Moonbounce aims to intervene proactively: redirecting conversations and prompting chatbots to respond in a more empathetic and constructive way, especially when a user’s well-being is at risk.
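
Moonbounce has not described how iterative steering works internally, so the following is only a hypothetical Python sketch of one way a third-party layer could steer a chatbot: check each draft reply against the policy and, if it is flagged, send the model a corrective instruction and ask for another draft, up to a fixed number of rounds. The `generate` and `violates` callables, the guidance text, and the fallback message are all illustrative assumptions.

```python
from typing import Callable, List, Optional

SAFE_FALLBACK = (
    "I'm sorry you're dealing with this. You don't have to go through it alone; "
    "talking to someone you trust or a crisis line can help."
)


def steer(
    prompt: str,
    generate: Callable[[str, List[str]], str],  # hypothetical: (prompt, guidance) -> draft reply
    violates: Callable[[str], Optional[str]],   # hypothetical: reason string, or None if acceptable
    max_rounds: int = 3,
) -> str:
    """Regenerate with corrective guidance until a draft passes the policy check."""
    guidance: List[str] = []
    for _ in range(max_rounds):
        draft = generate(prompt, guidance)
        reason = violates(draft)
        if reason is None:
            return draft  # draft complies with the policy
        # Feed the rejection reason back so the next draft changes course.
        guidance.append(f"Your last reply was rejected ({reason}). Respond supportively and safely.")
    return SAFE_FALLBACK  # no compliant draft: fall back to a vetted reply


# Toy stand-ins: this "model" misbehaves until it receives any guidance.
def toy_generate(prompt: str, guidance: List[str]) -> str:
    if not guidance:
        return "[unsafe draft]"
    return "That sounds really hard. Do you want to talk about it?"


def toy_violates(draft: str) -> Optional[str]:
    return "policy violation" if "unsafe" in draft else None


print(steer("I feel awful", toy_generate, toy_violates))
```

Capping the number of rounds bounds the extra latency each steering attempt adds, and the fallback means the user never receives a draft that failed the check.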

Asked about a potential acquisition, Levenson acknowledged that Moonbounce’s technology would be a natural fit for Meta (formerly Facebook), but said he wants the technology to remain widely accessible and to have an impact beyond any single company.

Levenson’s vision for Moonbounce rests on the belief that safety should not be an afterthought but a built-in part of every AI-mediated application. By helping organizations make safety a priority, Moonbounce aims to shape the future of content moderation and user protection online.
