Lessons in Machine Trust: Insights from an AI-Generated Honeypot

The Impact of AI-Generated Code on Security: A Real-World Case Study

Using AI models to assist with coding has become common practice in modern development teams. It can improve efficiency, but over-reliance on AI-generated code can introduce security vulnerabilities.

Intruder's own experience is a practical example of how AI-generated code can weaken security, and of the risks organizations should watch for.

AI Assistance in Building a Honeypot

As part of delivering their Rapid Response service, Intruder established honeypots to detect early-stage exploitation attempts. When faced with the need for a customized solution, they turned to AI to aid in drafting a proof-of-concept.

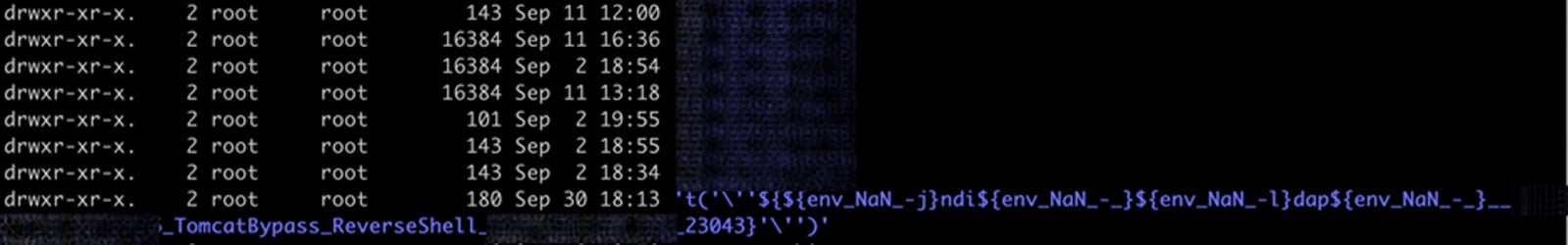

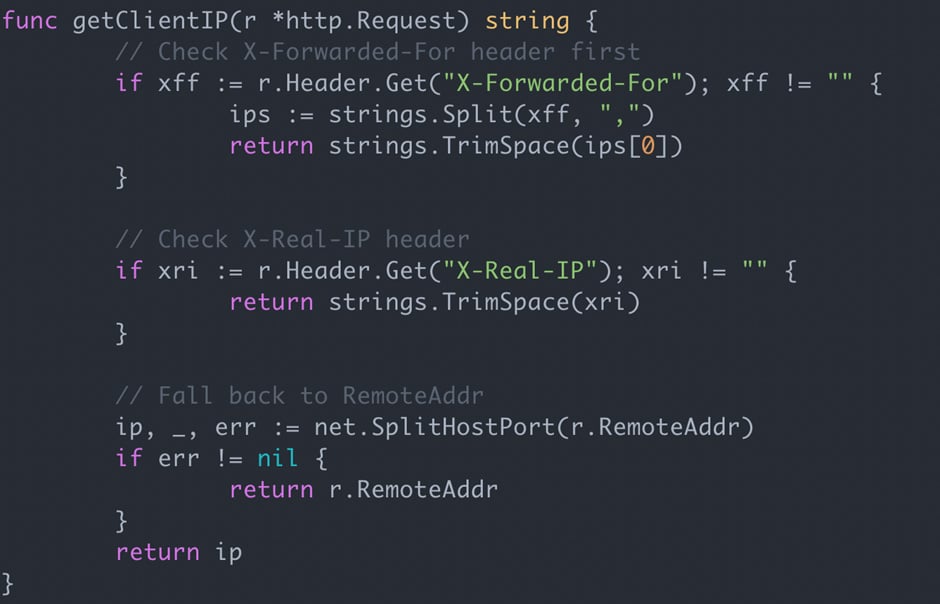

The code was deployed in an isolated environment, but unusual entries in the logs raised concerns. An investigation revealed that the AI had added logic that read client-supplied IP headers and treated their contents as the visitor's IP, so the recorded address was whatever the client chose to send.

Unforeseen Vulnerabilities

Examining the code exposed a critical flaw: client-supplied IP headers were accepted as the visitor's IP without validation. Such headers are only trustworthy when they are set by a proxy the operator controls. Because any client can send them, an attacker could spoof their IP address or inject a payload into a field the application treated as an address.
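The article does not show the honeypot's source, so the following Go sketch is only an illustration of the pattern. The header name `X-Forwarded-For`, the function names, and the `10.0.0.0/8` proxy range are all assumptions. `naiveClientIP` returns the header value unconditionally, while `proxyAwareClientIP` consults it only when the direct peer is a proxy the operator runs.

```go
package main

import (
	"fmt"
	"net"
	"net/netip"
	"strings"
)

// trustedProxies is a hypothetical allow-list of reverse proxies we operate.
var trustedProxies = []netip.Prefix{netip.MustParsePrefix("10.0.0.0/8")}

// naiveClientIP reproduces the risky pattern: if the client sent a forwarded
// value, its first entry is returned as-is, with no validation at all.
// remoteAddr corresponds to http.Request.RemoteAddr ("ip:port") and
// forwardedFor to r.Header.Get("X-Forwarded-For").
func naiveClientIP(remoteAddr, forwardedFor string) string {
	if forwardedFor != "" {
		first, _, _ := strings.Cut(forwardedFor, ",")
		return strings.TrimSpace(first)
	}
	host, _, err := net.SplitHostPort(remoteAddr)
	if err != nil {
		return remoteAddr
	}
	return host
}

// isTrustedProxy reports whether ip falls inside the allow-list.
func isTrustedProxy(ip netip.Addr) bool {
	for _, p := range trustedProxies {
		if p.Contains(ip) {
			return true
		}
	}
	return false
}

// proxyAwareClientIP uses the TCP peer address unless that peer is a trusted
// proxy. Assuming a single trusted hop, the right-most forwarded entry is the
// one our proxy appended, and it must parse as an IP address.
func proxyAwareClientIP(remoteAddr, forwardedFor string) (string, error) {
	peer, err := netip.ParseAddrPort(remoteAddr)
	if err != nil {
		return "", fmt.Errorf("unparseable peer address %q: %w", remoteAddr, err)
	}
	peerIP := peer.Addr().Unmap()
	if forwardedFor == "" || !isTrustedProxy(peerIP) {
		return peerIP.String(), nil
	}
	parts := strings.Split(forwardedFor, ",")
	claimed, err := netip.ParseAddr(strings.TrimSpace(parts[len(parts)-1]))
	if err != nil {
		return "", fmt.Errorf("forwarded value is not an IP address: %w", err)
	}
	return claimed.Unmap().String(), nil
}

func main() {
	// A direct client (not a trusted proxy) claims to be localhost.
	spoofed := "127.0.0.1"
	fmt.Println("naive:", naiveClientIP("198.51.100.9:4711", spoofed))
	ip, err := proxyAwareClientIP("198.51.100.9:4711", spoofed)
	fmt.Println("proxy-aware:", ip, err)
}
```

Whether the right-most entry is the correct one depends on how many proxies sit in front of the service, so the single-hop assumption would need adjusting for other deployments.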

If the attacker-controlled value were used elsewhere in the application, for example in a file path or an outbound request, the same flaw could lead to Local File Disclosure or Server-Side Request Forgery.
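To make the escalation risk concrete, here is a hedged Go sketch of two hypothetical uses of a visitor "IP": a per-visitor log file and an outbound lookup. Neither is described in the article, and the function names and the `/var/log/honeypot` directory are invented for illustration. The idea is simply to accept nothing but a literal IP address before the value reaches a path or a network call.

```go
package main

import (
	"errors"
	"fmt"
	"net/netip"
	"path/filepath"
	"strings"
)

var errNotIP = errors.New("value is not a plain IP address")

// parseVisitorIP accepts only a literal IPv4 or IPv6 address. IPv6 zones are
// rejected because netip.ParseAddr allows free-form text after '%'.
func parseVisitorIP(raw string) (netip.Addr, error) {
	addr, err := netip.ParseAddr(strings.TrimSpace(raw))
	if err != nil || addr.Zone() != "" {
		return netip.Addr{}, errNotIP
	}
	return addr.Unmap(), nil
}

// visitorLogPath builds a hypothetical per-visitor log file name. Without the
// parse step, a value such as "../../etc/passwd" would escape baseDir.
func visitorLogPath(baseDir, rawIP string) (string, error) {
	addr, err := parseVisitorIP(rawIP)
	if err != nil {
		return "", err
	}
	name := strings.ReplaceAll(addr.String(), ":", "_") + ".log"
	return filepath.Join(baseDir, name), nil
}

// allowOutboundLookup is a hypothetical guard for code that would contact the
// address (the SSRF case): internal and special-purpose ranges are refused.
func allowOutboundLookup(rawIP string) bool {
	addr, err := parseVisitorIP(rawIP)
	if err != nil {
		return false
	}
	return !(addr.IsLoopback() || addr.IsPrivate() || addr.IsLinkLocalUnicast() ||
		addr.IsUnspecified() || addr.IsMulticast())
}

func main() {
	for _, v := range []string{"203.0.113.7", "../../etc/passwd", "169.254.169.254"} {
		p, err := visitorLogPath("/var/log/honeypot", v)
		fmt.Printf("%q path=%q err=%v lookup=%t\n", v, p, err, allowOutboundLookup(v))
	}
}
```

Validation of this kind limits what a spoofed value can do, but it does not make the value truthful. The address should still come from a source the server can trust.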

Challenges with Static Analysis Tools

Intruder ran static analysis tools, including Semgrep OSS and Gosec, against the code, and the vulnerability still went unnoticed. This highlights the limits of static analysis in detecting context-dependent issues such as misplaced trust in client-supplied input.

Human oversight and an understanding of the context remain crucial for identifying and fixing security flaws in AI-generated code that automated tools miss.
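One possible complement to manual review is a behavioral check that sends forged headers and observes whether the resolved visitor IP changes. The Go sketch below is an assumption-laden illustration rather than Intruder's method: the two resolver functions and the list of header names are hypothetical stand-ins for whatever the code under review actually does.

```go
package main

import (
	"fmt"
	"net"
	"net/http"
	"net/http/httptest"
	"strings"
)

// peerIP resolves the visitor from the TCP peer address only.
func peerIP(r *http.Request) string {
	host, _, err := net.SplitHostPort(r.RemoteAddr)
	if err != nil {
		return r.RemoteAddr
	}
	return host
}

// headerTrustingIP is a hypothetical resolver with the flaw described above:
// it prefers a client-supplied header over the peer address.
func headerTrustingIP(r *http.Request) string {
	if v := r.Header.Get("X-Forwarded-For"); v != "" {
		return v
	}
	return peerIP(r)
}

// spoofableHeaders sends one forged header at a time from an untrusted peer
// and returns the names of headers that altered the resolved IP.
func spoofableHeaders(resolve func(*http.Request) string) []string {
	const forged = "192.0.2.66"
	var hits []string
	// Illustrative header names; extend the list to match the deployment.
	for _, name := range []string{"X-Forwarded-For", "X-Real-IP", "True-Client-IP"} {
		req := httptest.NewRequest(http.MethodGet, "/", nil)
		req.RemoteAddr = "198.51.100.9:4711"
		req.Header.Set(name, forged)
		if strings.Contains(resolve(req), forged) {
			hits = append(hits, name)
		}
	}
	return hits
}

func main() {
	fmt.Println("header-trusting resolver:", spoofableHeaders(headerTrustingIP))
	fmt.Println("peer-only resolver:", spoofableHeaders(peerIP))
}
```

A check like this only covers the headers it knows about, so it supports a reviewer's judgment rather than replacing it.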

Risks of AI Automation

Relying on AI-generated code poses challenges similar to supervising automation in aviation. Developers tend to have a weaker mental model of how code works when they did not write it themselves, so flaws are more likely to be missed during review.

AI tools can streamline development, but there are not yet established safety protocols for AI-generated code, so vigilant human review is still needed to keep it secure.

Lessons Learned

Insecure AI-generated output is not an isolated occurrence. Even experienced developers can overlook vulnerabilities when a model sounds confident and the code it produces appears to work at first glance.

Organizations must exercise caution when leveraging AI in coding processes, especially when it comes to security-critical aspects.

Implications for Teams Using AI

It is advisable not to let people without a development or security background rely on AI to generate code. Organizations should also strengthen their code review procedures and detection capabilities to reduce the risk from AI-introduced vulnerabilities.

AI-introduced vulnerabilities are expected to become more common, which makes it important to put these measures in place proactively.

Discover how Intruder detects exposures before they escalate into breaches. Schedule a demo today.

About the Author

Sam Pizzey, a Security Engineer at Intruder, brings a wealth of experience in application vulnerability detection. With a background in pentesting and a focus on remote vulnerability identification, Sam is dedicated to enhancing cybersecurity practices.

This article is sponsored and authored by Intruder.