Innovation

Scaling New Heights: DeepMind’s AI Agent Mastering Diverse Tasks in a Dynamic World Model

Over the past decade, deep learning has transformed how artificial intelligence (AI) agents perceive and act in digital environments, allowing them to master board games, control simulated robots and reliably tackle various other tasks. Yet most of these systems still depend on enormous amounts of direct experience—millions of trial-and-error interactions—to achieve even modest competence.

This brute-force approach limits their usefulness in the physical world, where such experimentation would be slow, costly, or unsafe.

To overcome these limitations, researchers have turned to world models—simulated environments where agents can safely practice and learn.

These world models aim to capture not just the visuals of a world, but the underlying dynamics: how objects move, collide, and respond to actions. However, while simple games like Atari and Go have served as effective testbeds, world models still fall short when it comes to representing the rich, open-ended physics of complex worlds like Minecraft or robotics environments.

Researchers at Google DeepMind recently developed Dreamer 4, a new artificial agent capable of learning complex behaviors entirely within a scalable world model, given a limited set of pre-recorded videos.

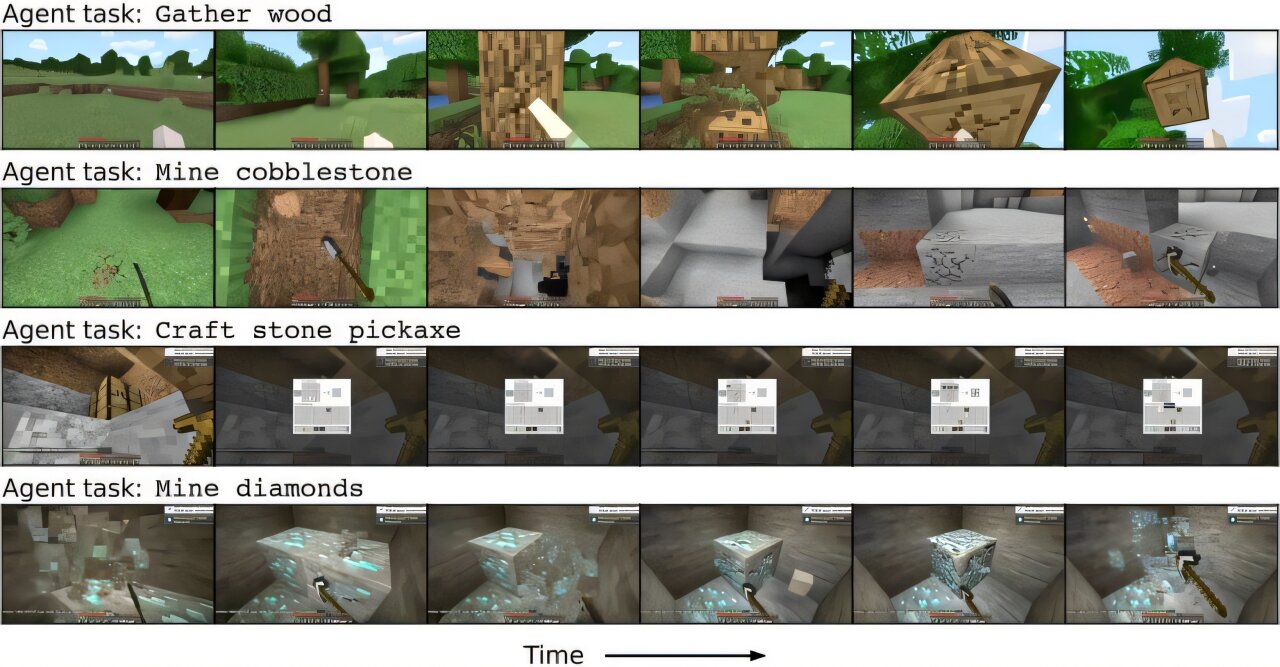

The new agent, presented in a paper published on the arXiv preprint server, is the first AI agent to obtain diamonds in Minecraft without practicing in the actual game at all. This achievement highlights the possibility of using Dreamer 4 to train successful AI agents purely in imagination, with important implications for the future of robotics.

“We as humans choose actions based on a deep understanding of the world and anticipate potential outcomes in advance,” Danijar Hafner, first author of the paper, told Tech Xplore.

However, AI agents trained solely inside small world models struggle in complex worlds like Minecraft, because those models fail to capture the rich physical interactions present in such environments. Practicing directly in the environment instead is infeasible for applications like physical robots, which can easily break during trial-and-error training in the physical world.

On the other hand, video models like Veo and Sora are making significant progress in generating realistic videos of diverse situations. Despite these advances, such video models are non-interactive and slow to generate content, making them unsuitable as neural simulators for training agents. The goal of Dreamer 4 was to train successful agents purely within world models that can realistically simulate complex environments.

To tackle this challenge, Hafner and his team chose Minecraft as a test bed for their AI agent due to its complexity and long-horizon tasks. By training their agent solely in “imagined” scenarios within a large transformer model, they aimed to teach it to complete tasks like mining diamonds without direct practice in the game. This approach mirrors how smart robots may have to learn in simulations to prevent damage in the physical world.

The AI agent, named Dreamer 4, was trained on a fixed dataset of recorded Minecraft gameplay videos. Its world model learns to predict future observations, actions, and rewards, and the agent then learns to select better actions through reinforcement learning in the scenarios the model imagines. The researchers designed an efficient transformer architecture and a novel training objective called shortcut forcing, which improve prediction accuracy and speed up generation by more than 25 times compared to typical video models.
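The idea of learning from recorded data and then practicing only inside a learned model can be illustrated with a toy sketch. This is not Dreamer 4's method: it swaps the transformer world model for a simple lookup table and the reinforcement learning algorithm for tabular value iteration. The corridor environment and every name in it (`true_step`, `fit_world_model`, `train_policy_in_imagination`) are hypothetical, chosen only for illustration.

```python
import random
from collections import defaultdict

# Hypothetical toy environment: a corridor of cells 0..N_STATES-1.
N_STATES = 6
ACTIONS = (-1, +1)  # step left, step right
GOAL = N_STATES - 1


def true_step(state, action):
    """Ground-truth dynamics, used only to record data and to evaluate."""
    next_state = min(max(state + action, 0), N_STATES - 1)
    reward = 1.0 if next_state == GOAL else 0.0
    return next_state, reward


def record_offline_dataset(num_transitions, seed=0):
    """Stand-in for pre-recorded gameplay: transitions from random play."""
    rng = random.Random(seed)
    data = []
    for _ in range(num_transitions):
        state = rng.randrange(N_STATES)
        action = rng.choice(ACTIONS)
        next_state, reward = true_step(state, action)
        data.append((state, action, next_state, reward))
    return data


def fit_world_model(data):
    """Tabular model: most frequent next state and mean reward per (s, a)."""
    next_counts = defaultdict(lambda: defaultdict(int))
    reward_sum = defaultdict(float)
    visits = defaultdict(int)
    for state, action, next_state, reward in data:
        key = (state, action)
        next_counts[key][next_state] += 1
        reward_sum[key] += reward
        visits[key] += 1
    model = {}
    for key, counts in next_counts.items():
        predicted_next = max(counts, key=counts.get)
        model[key] = (predicted_next, reward_sum[key] / visits[key])
    return model


def train_policy_in_imagination(model, gamma=0.9, sweeps=50):
    """Value iteration that queries only the learned model, never true_step."""
    q = defaultdict(float)
    for _ in range(sweeps):
        for (state, action), (next_state, reward) in model.items():
            if next_state == GOAL:
                future = 0.0  # treat reaching the goal as the end of an episode
            else:
                future = max(q[(next_state, a)] for a in ACTIONS)
            q[(state, action)] = reward + gamma * future
    return {s: max(ACTIONS, key=lambda a: q[(s, a)]) for s in range(N_STATES)}


if __name__ == "__main__":
    dataset = record_offline_dataset(500)
    world_model = fit_world_model(dataset)
    policy = train_policy_in_imagination(world_model)

    # Only now is the real environment touched, to check the learned policy.
    state, steps = 0, 0
    while state != GOAL and steps < 20:
        state, _ = true_step(state, policy[state])
        steps += 1
    print("reached goal:", state == GOAL, "in", steps, "steps")
```

The point of the sketch is the separation of phases: the real dynamics are used to record data and, at the very end, to evaluate, while the policy itself is trained only against the model's predictions.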

Overall, Dreamer 4 represents a significant advance in training agents within scalable world models and demonstrates the potential for AI to learn complex tasks in simulated environments before applying them in the real world. The result shows that the agent can learn on its own to solve intricate, long-horizon tasks.

“Learning solely offline is crucial for training robots that are susceptible to damage during physical practice,” stated Hafner. “Our study introduces a promising new method for creating intelligent robots capable of handling household chores and industrial tasks.”

During the initial tests conducted by the researchers, the Dreamer 4 agent demonstrated accurate predictions of various object interactions and game mechanics, thereby developing a dependable internal world model. This model surpassed the performance of earlier agents by a significant margin.

“The model enables real-time interactions on a single GPU, allowing human players to explore its simulated world and test its abilities,” mentioned Hafner. “We observed that the model accurately predicted the dynamics of mining and placing blocks, crafting simple items, and utilizing doors, chests, and boats.”

Another advantage of Dreamer 4 is that it performs well despite being trained on only a small amount of action data, that is, video footage showing the effects of pressing different keys and mouse buttons in Minecraft.

Looking ahead, Hafner and his team at DeepMind aim to enhance Dreamer 4’s world model by incorporating a long-term memory component. Their work could contribute to advancing robotics systems and to streamlining the training of algorithms for manual tasks in the real world.
