Guardian of Cybersecurity: Puneet Bhatnagar’s Innovator Spotlight

AI Agents, Denominator Problems, and the New Authority Control Plane: Why Identity Governance Has to Grow Up Fast, and the Guy Who Can Get You There

If you have been in cybersecurity long enough to remember when identity was a sleepy compliance checkbox, you are exactly the audience for what Puneet Bhatnagar is worried about right now.

Puneet describes himself as an identity security and cyber risk expert, someone who has spent almost two decades in the weeds of Identity Governance and Administration. In our conversation, you could hear both fatigue and excitement in his voice. Fatigue with the way enterprises still treat identity as an afterthought. Excitement because AI is finally forcing everyone to admit that the old model is cracking.

“Lot of the breaches that are occurring have some sort of a root or origin in mismanaged Identity Governance,” he says. That is the quiet part most boards prefer not to say out loud. We keep buying shiny threat intel feeds while our identity stack looks like a garage full of mismatched parts.

And now AI has rolled into that garage like a race car you are supposed to drive at 200 miles an hour on day one.

According to Puneet, if CISOs do not change how they think about identity, the combination of legacy access controls and autonomous AI agents is going to become the perfect storm.

AI for Security vs Security for AI

Puneet frames the current conversation through two lenses: security for AI and AI for security.

“There’s AI, there’s security for AI and there’s AI for security,” he says. “Security for AI is where a lot of the press and the conversations seem to be anchored around. There’s a lot of people talking about, hey, how do we secure AI? And that’s a super important question.”

If you went to RSAC or any major conference this year, you saw the security for AI story everywhere: model theft, prompt injection, data leakage. It is table stakes.

But Puneet argues the second lens is badly underdeveloped.

“You cannot fight like modern warfare with sticks and stones,” he says. “We need to leverage AI just as well to think about almost like evolved or new architecture that needs to then be created to start governing AI effectively and use cybersecurity to do that.”

In other words, AI is not just a new thing to protect. It is also the only realistic toolset we have to cope with the identity explosion it is causing.

Identity has been “the new perimeter” since 2012. How is that going?

If you feel like you have been hearing “identity is the new security perimeter” for a decade, you are not imagining it.

“When you go back to a lot of conversation has been around identity becoming the new security perimeter,” Puneet recalls. “When I was looking it up, 2012 is when that was first mentioned. Really 2012 is identity being the security perimeter.”

Twelve years later, most practitioners would struggle to say they actually have identity as their effective perimeter. The marketing slides got ahead of the implementation.

Despite all the buzzwords (zero trust, identity perimeter, continuous access evaluation), Puneet says enterprises still do not have true identity attack surface management.

“The first big gap I see is that we haven’t, despite all the buzz words around identity being the security perimeter – zero trust architecture and whatnot – we haven’t really gotten to the point as practitioners where we feel that the enterprises we represent are fully covered from an identity attack surface management perspective.”

Why? Because identity governance was boxed into the role auditors cared about, not the role attackers exploit.

“Traditionally, identity governance was seen more of as a compliance function. Hey, I need to pass my audits, get me clear on my SOX user access controls or HIPAA controls,” he says. “There was a certain scope that audits were limited to. It was maybe 15, 20 percent of your applications.”

The early IGA platforms built around that world did exactly what they were paid to do. They connected to a subset of key systems, made sure compliance reports came out the other end, and everyone chalked it up as a win.

From a threat perspective, that is a polite way of saying your denominator was always wrong.

The “denominator problem” and why coverage is the real control

Puneet calls this the denominator problem, and it is at the heart of his current thesis.

“If you think about SOC teams and security operations teams primarily relying on some form of control as a perimeter, you cannot rely on a control that doesn’t provide full coverage of your attack surface,” he explains.

He argues that identity teams have been governing a slice of the environment and pretending it is the whole. The result:

- Partial and incomplete attack surface coverage

- A false sense of comfort because audits “pass”

- A control fabric that attackers can route around

On top of that, the underlying technology landscape has never been friendly to broad, consistent identity governance.

“For single sign on, people rely on standards like SAML, OAuth and whatnot, but that’s identity verification,” he points out. “It’s not the access governance side. Access governance side, there are no standards. It’s the Wild Wild West. Someone calls it RBAC, someone calls it ABAC. And there is still an evolving area.”

For CISOs, that should sound familiar. Every major app has its own access model and permissions soup, and your IGA stack has to brute force its way into each one.

Puneet’s argument is that before you try to do anything sophisticated with AI, least privilege, or zero trust, you have to fix the denominator.

“We first need to think about what our denominator is, aka our attack surface, and start leveraging AI to build a complete coverage of that attack surface first,” he says. “It starts with that layer of deep, complete, rich identity observability.”
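To make the denominator concrete, here is a minimal Python sketch. It assumes a hypothetical inventory in which each application is simply flagged as governed or not; the names `App` and `identity_coverage` are illustrative and do not come from Puneet or from any IGA product.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class App:
    name: str
    governed: bool  # True if the IGA stack actually manages access to this app


def identity_coverage(inventory: list[App]) -> float:
    """Return the fraction of the full inventory that is under governance."""
    if not inventory:
        return 0.0
    governed = sum(1 for app in inventory if app.governed)
    # The denominator is the whole inventory, not just the audited slice.
    return governed / len(inventory)


# Hypothetical inventory: 10 applications, only 2 of them in audit scope.
inventory = [App(f"app-{i}", governed=(i < 2)) for i in range(10)]
print(f"coverage: {identity_coverage(inventory):.0%}")
```

With two of ten applications governed, the sketch reports 20 percent coverage, in line with the audit scope Puneet describes. The number only means something if the inventory itself is complete, which is the observability problem he says has to be solved first.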

From governing access to governing decisions

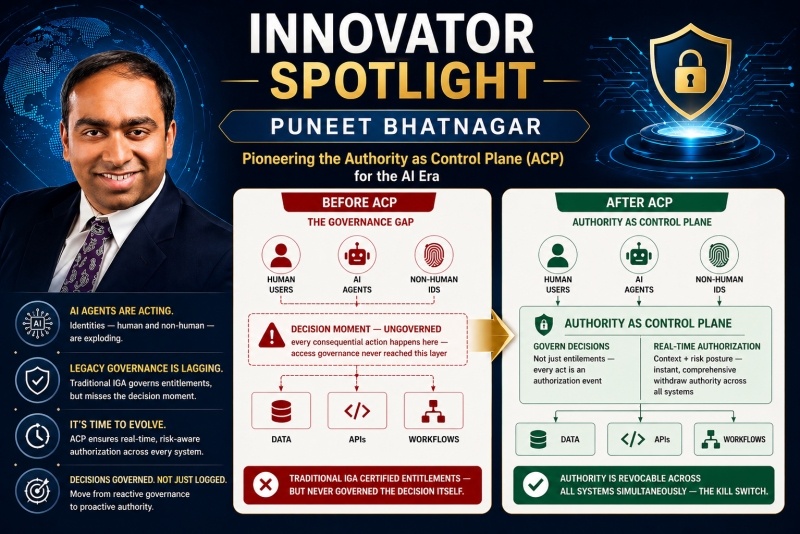

Here is where his thinking diverges from traditional IGA.

Visibility and coverage are just the beginning when it comes to understanding your full identity attack surface. The uncomfortable truth is that current governance focuses on what people are allowed to do, rather than what they actually do.

Puneet emphasizes that governing access is not the same as governing decisions. In the past, decisions were made by humans and only the outcomes were recorded. However, with the rise of AI, decisions are now being made by AI agents across various applications and workflows.

Boards are starting to grasp the implications of this shift. They are asking where AI agents are running and whether an AI kill switch exists. Without knowing where agents are acting, what decisions they are making, and under what authority, a kill switch is impossible to implement.

Puneet introduces the concept of an “authority control plane” to address this issue. This involves continuously verifying whether an actor, human or machine, is authorized to make a specific decision in a specific context.
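One way to picture that check is the rough Python sketch below. It is an illustration under stated assumptions, not Puneet's design or any vendor's API: the class `AuthorityControlPlane`, its methods, and the refund example are all hypothetical. The idea it shows is that authority is evaluated per decision and per context at runtime, that every evaluation is logged, and that revoking an actor acts as a simple kill switch.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class DecisionRequest:
    actor_id: str  # a human user or an AI agent (hypothetical identifier)
    decision: str  # the decision being attempted, e.g. "approve_refund"
    context: dict  # runtime facts about this specific decision


@dataclass
class AuthorityControlPlane:
    # Maps (actor_id, decision) to a predicate over the request context.
    grants: dict = field(default_factory=dict)
    # Actors whose authority has been revoked: a very simple kill switch.
    revoked: set = field(default_factory=set)
    # Every evaluated request is recorded so decisions stay observable.
    audit_log: list = field(default_factory=list)

    def grant(self, actor_id, decision, condition):
        self.grants[(actor_id, decision)] = condition

    def revoke(self, actor_id):
        self.revoked.add(actor_id)

    def authorize(self, request: DecisionRequest) -> bool:
        condition = self.grants.get((request.actor_id, request.decision))
        allowed = (
            request.actor_id not in self.revoked
            and condition is not None
            and bool(condition(request.context))
        )
        self.audit_log.append((request, allowed))
        return allowed


plane = AuthorityControlPlane()
# Assumed policy: the agent may approve refunds of up to 500.
plane.grant("refund-agent", "approve_refund", lambda ctx: ctx.get("amount", 0) <= 500)

small = DecisionRequest("refund-agent", "approve_refund", {"amount": 250})
large = DecisionRequest("refund-agent", "approve_refund", {"amount": 5000})

print(plane.authorize(small))  # within the granted authority
print(plane.authorize(large))  # same actor, same decision, different context
plane.revoke("refund-agent")
print(plane.authorize(small))  # denied once the actor is revoked
```

The same actor gets a different answer depending on context, which is the difference between governing access and governing decisions. A production system would also need durable storage, policy versioning, and latency budgets, none of which this sketch attempts.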

He predicts that the next 6 to 12 months will involve restructuring within enterprises to accommodate AI governance horizontally across multiple C-level roles. This shift will require collaboration between CISOs, divisional CTOs, and enterprise technology CTOs to develop a comprehensive AI governance strategy.

As the market responds to this shift, startups and established players are working to define a platform layer for AI governance. This includes creating agents, monitoring and logging their actions, and integrating them into the identity fabric.

One of the key challenges highlighted by Puneet is the speed at which AI agents operate. Unlike humans who may take days to make decisions, AI agents act at machine speed, requiring a runtime architecture to support real-time authorization.

Existing players and newcomers in the field are exploring ways to adapt to this new reality, with some startups using AI to learn application access models from observed behavior. This innovative approach represents a significant departure from traditional methods of access management.

The conversation around AI governance is evolving rapidly, and it increasingly includes the cost of running AI in the enterprise. Organizations that govern proactively will be better placed than those that react after the fact.

Puneet’s Insights on AI Governance and Financial Operations

As Puneet reflects on the early days of cloud adoption, he draws strong parallels to the current state of AI governance. He emphasizes the importance of implementing guardrails to prevent the AI equivalent of a “surprise seven-figure cloud bill” as enterprises increasingly deploy agents without proper oversight.

According to Puneet, AI governance should encompass not only security and identity management but also financial operations. He highlights the need to ensure that deploying AI agents does not lead to budget overruns, drawing a direct comparison to the challenges enterprises faced when cloud platforms like AWS and Azure first emerged.

Furthermore, Puneet warns against the risks associated with immature governance practices, which can result in the accumulation of excessive costs. The deployment of invisible agents operating within opaque billing models poses a significant threat to the financial stability of organizations, a concern that should not be overlooked by CISOs and CIOs.
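A spending guardrail of the kind described above can be sketched in a few lines of Python. This is a hedged illustration only: `AgentBudget`, `BudgetExceeded`, the agent name, and the dollar figures are hypothetical, and real billing models are usually far less transparent than a single per-call cost.

```python
from dataclasses import dataclass


class BudgetExceeded(Exception):
    """Raised when a charge would push an agent past its spending cap."""


@dataclass
class AgentBudget:
    agent_id: str
    monthly_cap_usd: float
    spent_usd: float = 0.0

    def charge(self, cost_usd: float) -> float:
        """Record a cost and return the remaining budget.

        Any charge that would exceed the cap is refused before it is recorded.
        """
        if cost_usd < 0:
            raise ValueError("cost must be non-negative")
        if self.spent_usd + cost_usd > self.monthly_cap_usd:
            raise BudgetExceeded(
                f"{self.agent_id}: charge of {cost_usd} exceeds the remaining budget"
            )
        self.spent_usd += cost_usd
        return self.monthly_cap_usd - self.spent_usd


budget = AgentBudget("report-agent", monthly_cap_usd=100.0)
print(budget.charge(60.0))  # remaining budget after the first charge

try:
    budget.charge(50.0)  # would take total spend to 110, over the cap
except BudgetExceeded as err:
    print(f"blocked: {err}")
```

The point of the sketch is the ordering: the cap is checked before the spend happens, so the agent is stopped rather than the bill being discovered afterwards.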

Key Recommendations for CISOs

In light of these challenges, Puneet advises CISOs and senior security leaders to take proactive steps to enhance their AI governance practices. Instead of hastily adopting new AI governance platforms, he suggests a more strategic approach to address the shortcomings of existing identity controls in the evolving technological landscape.

- Enhance Identity Observability: CISOs should focus on gaining a comprehensive understanding of their organization’s identity attack surface, leveraging AI to achieve deep visibility across human users, machine identities, and AI agents.

- Transition to Decision-Centric Governance: It is essential to shift from access-centric to decision-centric governance, prioritizing the identification of decision-makers and ensuring proper authorization for critical workflows.

While there may not be an immediate solution available, CISOs can begin restructuring their architecture and engaging with vendors to align with the principles of decision-centric governance. By embracing this approach, organizations can better manage the complexities of AI governance and mitigate potential risks.

Call to Action for CISOs

For CISOs and security leaders, the presence of AI agents within organizational environments necessitates a proactive approach to governance. It is crucial to map the identity attack surface, identify areas where AI agents are making decisions, and collaborate with vendors to implement runtime governance solutions.

- Implement Concrete Measures: CISOs should conduct a thorough assessment of their identity and security tools, pilot new solutions for improved observability, and drive cross-functional AI governance initiatives involving key stakeholders.

Puneet underscores the urgency of evolving governance practices to accommodate the increasing role of AI agents in decision-making processes. By embracing decision-centric governance and fostering collaboration across departments, organizations can effectively navigate the challenges posed by autonomous technologies.

Meet Pete Green: A Seasoned Cybersecurity Expert

As the CISO/CTO of Anvil Works, Pete Green brings over 25 years of experience in information technology and cybersecurity to his role. His diverse background encompasses technical roles such as LAN/WLAN Engineer and security leadership positions like CISO and Virtual CISO.

Throughout his career, Pete has supported clients across various industries, including government, financial services, healthcare, and technology. He holds advanced degrees in Computer Information Systems and Business Administration, reflecting his commitment to continuous learning and expertise in cybersecurity.
