
Google Unveils Groundbreaking AI Chips: Boosting Performance and Securing Billions in Anthropic Megadeal

Google Cloud is set to unveil its most powerful artificial intelligence infrastructure yet, introducing the Ironwood custom AI accelerator chip and expanding its Arm-based computing options to meet growing demand for AI model deployment. The move reflects a broader industry shift in focus from training AI models to serving them to billions of users.

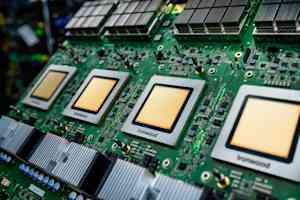

Ironwood, the newest generation of Google’s custom Tensor Processing Unit (TPU), is designed to outperform its predecessor on both training and inference workloads. The chip will become generally available soon. Anthropic, a key player in AI safety, has committed to accessing up to one million TPU chips, a commitment that ranks among the largest AI infrastructure deals to date.

Google’s decision to build custom silicon like Ironwood instead of relying solely on Nvidia’s dominant GPUs is a strategic bet on better long-term economics and performance. It also highlights the intensifying competition among cloud providers to control the infrastructure layer that powers artificial intelligence.

The announcement emphasizes serving AI models in production applications, where low latency, high throughput, and reliability matter most. The move from training to deployment changes infrastructure requirements: training is a batch process, whereas serving must answer each user request quickly and reliably, particularly when AI systems take actions autonomously.

Ironwood is built for scale: a single “pod” connects up to 9,216 chips through Google’s Inter-Chip Interconnect (ICI) network. This fabric lets thousands of processors share memory and processing power at once, which yields significant performance gains for AI workloads.

Anthropic’s commitment to access up to one million TPU chips underscores the industry’s growing appetite for AI infrastructure. The partnership will let Anthropic keep scaling its compute capacity to meet exponentially growing demand for its Claude models.
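For a sense of scale, the pod size and the size of Anthropic’s commitment can be combined in a quick back-of-envelope calculation. The short Python sketch below assumes, purely for illustration, that every chip sits in a full-size 9,216-chip pod; the article does not say how Anthropic’s capacity will actually be deployed, and `pods_needed` is a hypothetical helper.

```python
import math

# Figures taken from the article: up to 9,216 chips per Ironwood pod,
# and Anthropic's commitment of up to one million TPU chips.
CHIPS_PER_POD = 9_216
ANTHROPIC_CHIPS = 1_000_000


def pods_needed(total_chips: int, chips_per_pod: int = CHIPS_PER_POD) -> int:
    """Hypothetical helper: full-size pods needed to hold total_chips.

    Assumes (for illustration only) that chips are deployed in complete pods.
    """
    return math.ceil(total_chips / chips_per_pod)


if __name__ == "__main__":
    exact = ANTHROPIC_CHIPS / CHIPS_PER_POD
    print(f"{exact:.1f} pod-equivalents, {pods_needed(ANTHROPIC_CHIPS)} full pods")
```

Under that assumption, one million chips works out to roughly 108.5 pod-equivalents, or 109 full pods.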

Alongside Ironwood, Google introduced expanded options for Axion, its family of custom Arm-based CPUs for the general-purpose workloads that support AI applications. These processors complement specialized accelerators like TPUs by handling tasks such as data ingestion, preprocessing, and application logic.

Google’s software updates aim to keep Ironwood and Axion chips fully utilized. Google Kubernetes Engine (GKE), for example, offers advanced maintenance and topology awareness for TPU clusters, while the company’s Inference Gateway load-balances requests across model servers to improve performance and reduce serving costs.
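To illustrate what load-balancing across model servers means, here is a minimal Python sketch of a generic least-loaded policy: each request goes to the replica with the fewest requests in flight. This is a simplified toy under stated assumptions, not the Inference Gateway’s actual algorithm; the `ModelServer` and `LeastLoadedBalancer` names are hypothetical, and production gateways likely weigh richer, model-aware signals.

```python
from dataclasses import dataclass, field


@dataclass
class ModelServer:
    """A hypothetical model-server replica tracked by the balancer."""
    name: str
    in_flight: int = 0  # requests currently being served


@dataclass
class LeastLoadedBalancer:
    """Routes each request to the replica with the fewest in-flight requests.

    A generic least-outstanding-requests policy for illustration only;
    it is NOT the algorithm used by Google's Inference Gateway.
    """
    servers: list[ModelServer] = field(default_factory=list)

    def acquire(self) -> ModelServer:
        # Pick the least-busy replica; min() returns the first minimum,
        # so ties go to the earliest server in the list.
        server = min(self.servers, key=lambda s: s.in_flight)
        server.in_flight += 1
        return server

    def release(self, server: ModelServer) -> None:
        # Call when a request finishes so the load count stays accurate.
        server.in_flight -= 1


if __name__ == "__main__":
    lb = LeastLoadedBalancer([ModelServer("replica-a"), ModelServer("replica-b")])
    first = lb.acquire()   # both idle, tie goes to replica-a
    second = lb.acquire()  # replica-a is busy, so replica-b
    lb.release(first)      # replica-a finishes its request
    third = lb.acquire()   # replica-a is idle again
    print(first.name, second.name, third.name)
```

In this example the first two requests land on different replicas, and once `replica-a` finishes its request it receives the third. Routing by current load rather than simple round-robin helps when request durations vary widely, as they do when models generate outputs of different lengths.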

Google is tackling the challenge of powering and cooling one-megawatt server racks with high-voltage DC power delivery and liquid cooling. It is also working with Meta and Microsoft to standardize the electrical and mechanical interfaces for high-voltage DC distribution, to support the rising power demands of AI workloads.
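The case for high-voltage DC follows from basic circuit arithmetic: for a fixed power P, the current is I = P / V, and resistive loss in the conductors scales with I²R. The Python sketch below compares a one-megawatt rack at 48 V and 800 V DC; both voltages are illustrative assumptions, since the article does not state the voltage Google uses.

```python
# Back-of-envelope: current needed to deliver a fixed power at a given DC
# voltage, using I = P / V. Conduction loss scales with I**2 * R, so raising
# the voltage cuts both the current and the resistive loss.
RACK_POWER_W = 1_000_000  # the one-megawatt rack from the article


def current_amps(power_w: float, voltage_v: float) -> float:
    """Current (A) required to carry power_w watts at voltage_v volts DC."""
    return power_w / voltage_v


def relative_loss(v_low: float, v_high: float) -> float:
    """I^2*R loss at v_high as a fraction of the loss at v_low.

    Assumes the same power and the same conductor resistance in both cases.
    """
    return (v_low / v_high) ** 2


if __name__ == "__main__":
    # 48 V and 800 V are illustrative assumptions, not figures from the article.
    for volts in (48, 800):
        print(f"{volts:>4} V DC -> {current_amps(RACK_POWER_W, volts):,.0f} A")
    print(f"loss at 800 V relative to 48 V: {relative_loss(48, 800):.4f}")
```

At 48 V the rack would draw about 20,800 A, versus 1,250 A at 800 V, and for the same conductor the resistive loss falls to 0.36% of the 48 V figure. In practice engineers would resize conductors rather than hold resistance fixed, so the ratio is only indicative.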

Google’s custom silicon strategy challenges Nvidia’s dominance of the AI accelerator market, and rival cloud providers such as Amazon Web Services and Microsoft are also building their own chips to differentiate their offerings. Google is betting that its broad chip portfolio, developed hand in hand with its software, will deliver advantages through tight hardware-software integration.

As the AI industry evolves, Google’s commitment to custom infrastructure built for the age of inference may prove to be a strategic advantage. Because the hardware is offered through its cloud, customers can use cutting-edge AI infrastructure without a large up-front capital investment.
