The Rise of Black Market AI Accounts: A Lucrative Underground Trade

Artificial intelligence (AI) tools have quickly become an integral part of daily life, supporting tasks such as content creation, software development, research, and business processes.

Popular platforms such as ChatGPT, Claude, Microsoft Copilot, Perplexity, and others are now commonly used by individuals and organizations, assisting in tasks involving internal documents, research data, software code, and other sensitive information.

Many organizations have seamlessly integrated these AI tools into their daily operations, making them not just convenient but also essential for their functioning.

As the reliance on AI services grows, so does their value, not only for legitimate users but also for the cybercrime ecosystem. Advanced AI models can significantly reduce effort, enhance output quality, and speed up tasks that previously demanded expertise or time.

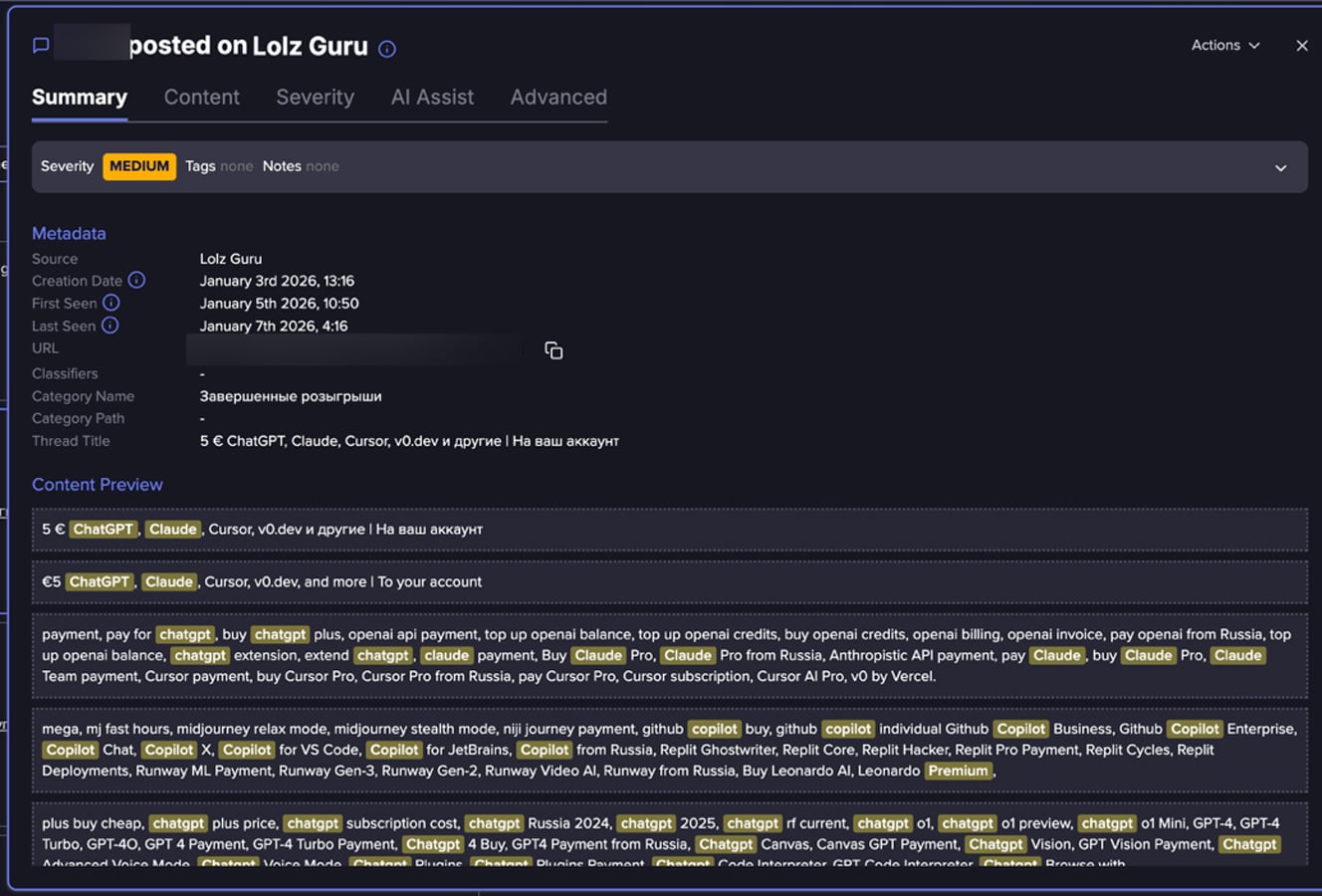

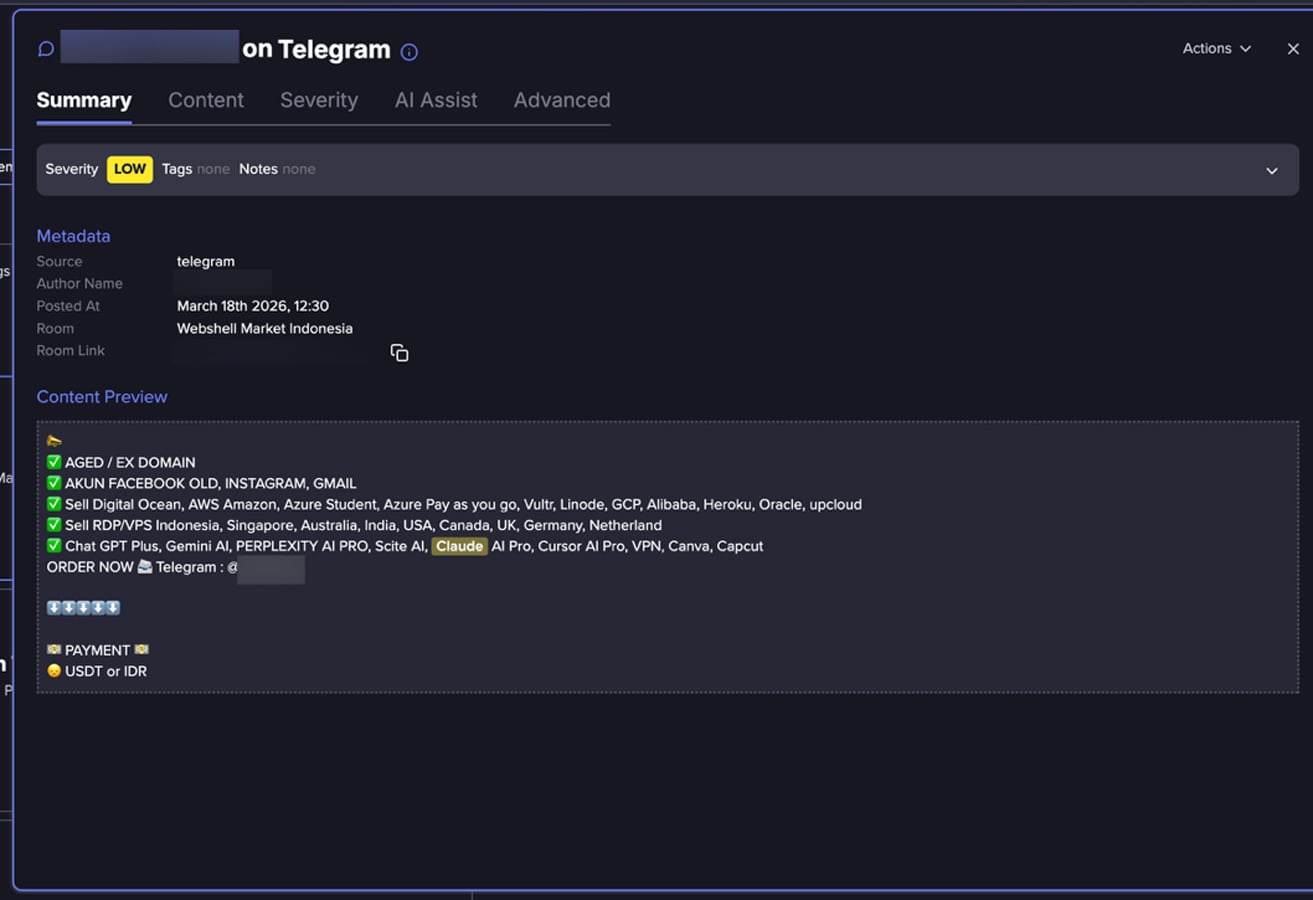

Flare analysts reviewed numerous posts from fraud-focused online communities and found a burgeoning underground market centered on access to premium AI platforms.

Instead of isolated incidents of account misuse, the data points to a recurring pattern where access to AI platforms is continuously advertised and redistributed through resale-style listings. These listings often promote discounted subscriptions, bundled access to multiple AI tools, or usage models that claim to eliminate typical platform restrictions.

This trend hints at a broader pattern in underground markets where digital services are bundled, repackaged, and resold to a wider audience of buyers.

How Do Threat Actors Obtain AI Accounts?

While the Flare researchers’ dataset doesn’t directly document acquisition methods, it suggests several pathways:

- Exposed keys and secrets: Recent research by Flare revealed how exposed keys can be discovered on Docker Hub.
- Credential theft and account takeover: Listings offering aged Gmail or Outlook accounts may indicate the reuse of compromised credentials to access AI platforms.
- Bulk account creation and verification bypass: Mentions of virtual phone numbers suggest actors create accounts at scale while circumventing verification controls.
- Abuse of trials and promotional programs: References to gift codes or trial access suggest exploitation of onboarding incentives.
- Shared or resold subscriptions: Some listings and discussions imply that access is shared among multiple users rather than owned by a single individual.
- Potential API key or developer access resale: Mentions of API keys may indicate marketing of backend or programmatic access.

These methods collectively suggest a mix of account compromise, large-scale provisioning, and policy abuse.
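For defenders, the exposed-keys pathway is the easiest one to check for in their own artifacts. The following is a minimal sketch, not Flare's method: it scans text such as a Dockerfile or build log for strings that look like AI API keys. The two regular expressions are illustrative assumptions based on the commonly seen `sk-` and `sk-ant-` prefixes, and the function name `find_exposed_keys` is hypothetical.

```python
import re

# Illustrative patterns only (assumptions, not vendor-published formats):
# tokens that begin with "sk-ant-" or "sk-" followed by a long random string.
KEY_PATTERNS = {
    "anthropic_style": re.compile(r"\bsk-ant-[A-Za-z0-9_-]{20,}"),
    "openai_style": re.compile(r"\bsk-(?!ant-)[A-Za-z0-9_-]{20,}"),
}


def find_exposed_keys(text):
    """Return (line_number, pattern_name, redacted_match) for each hit."""
    findings = []
    for lineno, line in enumerate(text.splitlines(), start=1):
        for name, pattern in KEY_PATTERNS.items():
            for match in pattern.finditer(line):
                token = match.group(0)
                # Redact the middle so the report itself does not leak the key.
                redacted = token[:6] + "..." + token[-4:]
                findings.append((lineno, name, redacted))
    return findings


if __name__ == "__main__":
    # Fake keys built from repeated characters, for demonstration only.
    sample = (
        "FROM python:3.12\n"
        "ENV OPENAI_API_KEY=sk-" + "A" * 24 + "\n"
        "ENV OTHER_KEY=sk-ant-" + "B" * 24 + "\n"
        "RUN echo task-done\n"
    )
    for finding in find_exposed_keys(sample):
        print(finding)
```

A pattern match is only a lead. Any key found this way should be treated as compromised and rotated, since prefix-based patterns can both miss real keys and flag harmless strings.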

You can monitor underground markets and Telegram channels where threat actors buy and sell stolen AI platform access—before attackers use it against your organization.

Why Does Underground AI Access Attract Buyers?

- Cost: Official subscriptions for premium AI services typically start at around $20 per month and can increase based on usage or enterprise features. In contrast, underground listings often highlight cheaper access or bundled offerings, indicating a significant price difference.
- Scale: Buyers needing multiple accounts for automation, testing, or evasion may find it more convenient to purchase ready-made access instead of creating individual accounts, especially where verification and payment processes pose challenges.
- Sanctions bypass: In certain countries, access to and payment for platforms such as ChatGPT and Claude may be restricted. Underground markets offer accounts that skip the onboarding steps and provide immediate access.
- Model restrictions: Some listings promise “fewer restrictions,” appealing to users seeking to bypass safeguards or usage limits. While these claims may seem exaggerated, they reflect the reality in underground markets where accounts or API keys are sold with reduced controls.

Flare link to the post; sign up for the free trial to access it if you aren’t already a customer.

How Threat Actors Are Using AI Platforms

Access to AI platforms enables a range of activities, extending beyond mere misuse of the services themselves.

In fraud scenarios, generative AI tools can generate phishing messages, scam scripts, and multilingual social engineering content at scale. AI-generated text enhances the realism and effectiveness of fraudulent communications.

Criminal groups are increasingly automating phishing and fraud operations using generative AI tools, allowing them to create convincing content with speed and sophistication, as noted in Europol’s 2025 threat assessment.

Attackers leverage AI to craft personalized social engineering campaigns, tailoring malicious messages precisely to individual targets and contexts, as reported by Palo Alto Networks’ Unit 42.

AI tools can also aid in automation, coding, and content generation, enabling actors to work more efficiently, even without extensive technical knowledge. Some platforms offer image, audio, or video generation capabilities to create synthetic content for impersonation or deception.

The Emerging Underground Market for AI Accounts

Flare’s findings indicate that threat actors and underground sellers treat AI accounts as a valuable black market commodity, integrated into an ecosystem that trades in access, identity, and digital services. AI accounts are often listed alongside email accounts, developer tools, and verification infrastructure.

The analysis reveals various AI-related offerings, from reselling premium subscriptions to claiming unrestricted or extended access. These offers are presented in simple, product-like language, appealing to buyers without technical expertise.

Offerings include ChatGPT Plus and Pro subscriptions, Claude Pro access, Microsoft Copilot bundled with Office 365 accounts, Perplexity AI Pro, and API-related services, sometimes bundled together.

Some posts use promotional terms like “premium access,” “no limits,” or “full API access” to attract buyers seeking flexibility beyond official plans.

This trend may democratize access and expand misuse to a wider range of actors. As AI services evolve and gain traction, their value in underground markets is poised to grow.

Addressing this shift will likely require enhanced account security, vigilant monitoring for suspicious activity, and increased awareness of how these services integrate into broader fraud ecosystems.

How Organizations Can Mitigate the Risk

- Implement multi-factor authentication (MFA) on all AI accounts
- Refrain from sharing sensitive data unless in approved enterprise environments
- Monitor login patterns and usage anomalies
- Utilize enterprise-grade accounts with robust controls
- Regularly rotate and secure API keys
- Monitor underground activities to identify exposed accounts, keys, and secrets
- Educate employees on the risks of shared or purchased accounts
- Enforce governance policies for AI tool usage
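One way to monitor login patterns is to look for signs that an account is being shared or resold. The sketch below flags accounts that log in from more than one country within a short window. It is illustrative only: the event format, the one-hour window, the country threshold, and the function name `flag_shared_account_logins` are all assumptions, and a real deployment would read from whatever audit logs the AI provider or identity provider exposes.

```python
from collections import defaultdict
from datetime import datetime, timedelta


def flag_shared_account_logins(events, window=timedelta(hours=1), max_countries=1):
    """Flag accounts seen in more than `max_countries` countries within `window`.

    `events` is an iterable of (account, iso_timestamp, country) tuples;
    this shape is a hypothetical stand-in for a real audit log export.
    Returns {account: sorted list of countries in the first offending window}.
    """
    by_account = defaultdict(list)
    for account, timestamp, country in events:
        by_account[account].append((datetime.fromisoformat(timestamp), country))

    flagged = {}
    for account, logins in by_account.items():
        logins.sort()
        start = 0
        for end in range(len(logins)):
            # Advance the window start until the span fits inside `window`.
            while logins[end][0] - logins[start][0] > window:
                start += 1
            countries = {country for _, country in logins[start:end + 1]}
            if len(countries) > max_countries:
                flagged[account] = sorted(countries)
                break
    return flagged


if __name__ == "__main__":
    sample_events = [
        ("alice@example.com", "2025-01-01T09:00:00", "US"),
        ("alice@example.com", "2025-01-01T09:20:00", "VN"),
        ("bob@example.com", "2025-01-01T09:00:00", "DE"),
        ("bob@example.com", "2025-01-02T09:00:00", "FR"),
    ]
    print(flag_shared_account_logins(sample_events))
```

In this sample, only the first account is flagged, because its two logins come from different countries 20 minutes apart, while the second account's logins are a day apart. Country-based checks are coarse and will misfire for VPN users and travelers, so a flag is a prompt for review rather than proof of compromise.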

Learn more by signing up for our free trial.

Sponsored and written by Flare.
