The Conspiracy Theory Behind Google’s Nano Banana Pro

Unveiling the Risks of AI Content Generation

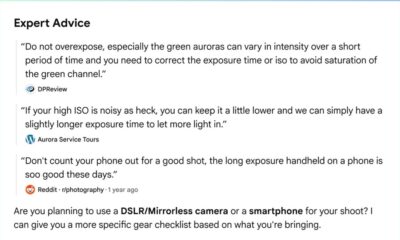

Generating images with Google’s Gemini app can lead to unexpected and controversial outcomes. The app, which now includes the Nano Banana Pro image generator, can be used to create misleading and harmful content.

Despite the platform’s efforts to filter out inappropriate content, users have found ways to circumvent these restrictions. The lack of strict guardrails raises concerns about the misuse of generative AI technology, especially in creating images that depict sensitive and controversial subjects.

The ease with which users can get Nano Banana Pro to generate unsettling images, such as a plane crashing into the Twin Towers or a shooter at Dealey Plaza, highlights how difficult content moderation remains for generative AI. Whether cartoonish or realistic, these images can spread disinformation and incite controversy.

Furthermore, the app’s compliance with requests to generate disturbing scenarios, like the White House on fire or characters in historical tragedies, underscores the need for stricter guidelines and oversight in AI content creation. The implications of these easily accessible tools for creating misleading or offensive content are significant.

While the images produced may not depict graphic violence, they still raise concerns about copyright infringement, historical accuracy, and ethics. That potential for misuse underscores the need for responsible use and regulation of AI-generated content.
